Project Name: Open vRouter Quickstart (OVR)

- Proposed name for the project: Open vRouter Quickstart

- Proposed name for the repository: ovrq

- Project Categories: Collaborative Development

Project Proposal Slides:

Project Description:

The Open vRouter Quickstart project in OPNFV is intended to allow users to explore a number of OPNFV use cases using a software stack that leverages overlay networking between virtual machines and uses a standards-based control plane.

The software stack will be composed of the following software modules:

- OpenStack (Juno release)

- OpenContrail virtual networking controller and vRouter (Version 2.2)

- KVM/QEMU hypervisor (Ubuntu 14.04), with Docker (Version 1.6) containers

The software stack will be deployable at a variety of scales, from an all-in-one system that can run on a laptop to larger multi-server deployments that more closely mimic production environments.

Documentation and scripts provided with the OVR software stack will automate the configuration of virtual machines and network policies, illustrating how overlay networking can be applied in a number of use cases, including:

- Multi-tenant infrastructure as a service

- Dynamic creation and application of network policy

- Creation of service chains and application of network policy to direct traffic through them

- Use of OpenStack Heat templates for application stack and service chain creation

- Load balancing in service chains, reverse flow symmetry, flow stability during scaling

- Use of KVM hypervisor and Docker containers for VNFs

- Flow mirroring to a virtualized packet analyzer

- Flow-based analytics on a per-network, per-VM and per-TCP-port basis

- Path visualization for flows between VMs

- Infrastructure health monitoring
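The service-chain and reverse-flow-symmetry use cases above can be illustrated with a small model. This is only a sketch under stated assumptions: the `Policy` class, the `path_for_flow` helper, and the network and service names are hypothetical and are not part of OpenContrail or of the OVR scripts. It shows a policy steering traffic between two virtual networks through an ordered chain of services, with the reverse flow traversing the same services in the opposite order.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy: allow traffic between two virtual networks,
    optionally steered through an ordered chain of service instances."""
    src_net: str
    dst_net: str
    services: Tuple[str, ...] = ()


def path_for_flow(policies: Sequence[Policy], src_net: str,
                  dst_net: str) -> Optional[List[str]]:
    """Return the ordered hops for a flow, or None if no policy allows it."""
    for p in policies:
        if (p.src_net, p.dst_net) == (src_net, dst_net):
            # Forward direction: services in the order the policy lists them.
            return [src_net, *p.services, dst_net]
        if (p.dst_net, p.src_net) == (src_net, dst_net):
            # Reverse direction: same services, opposite order (flow symmetry).
            return [src_net, *reversed(p.services), dst_net]
    return None


# Illustrative names only.
policies = [Policy("web-net", "db-net", ("firewall", "load-balancer"))]
forward = path_for_flow(policies, "web-net", "db-net")
reverse = path_for_flow(policies, "db-net", "web-net")
```

Here `reverse` is the hop list of `forward` read backwards, which is the property the reverse flow symmetry use case is meant to demonstrate.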

Additionally, gateway use cases will be supported using the Simple Gateway feature of OpenContrail or using a physical router if one is available. Instructions will be provided to enable VMs on virtual networks to be accessible externally using public IP addresses, and to demonstrate vCPE use cases using a simple virtualized firewall service.

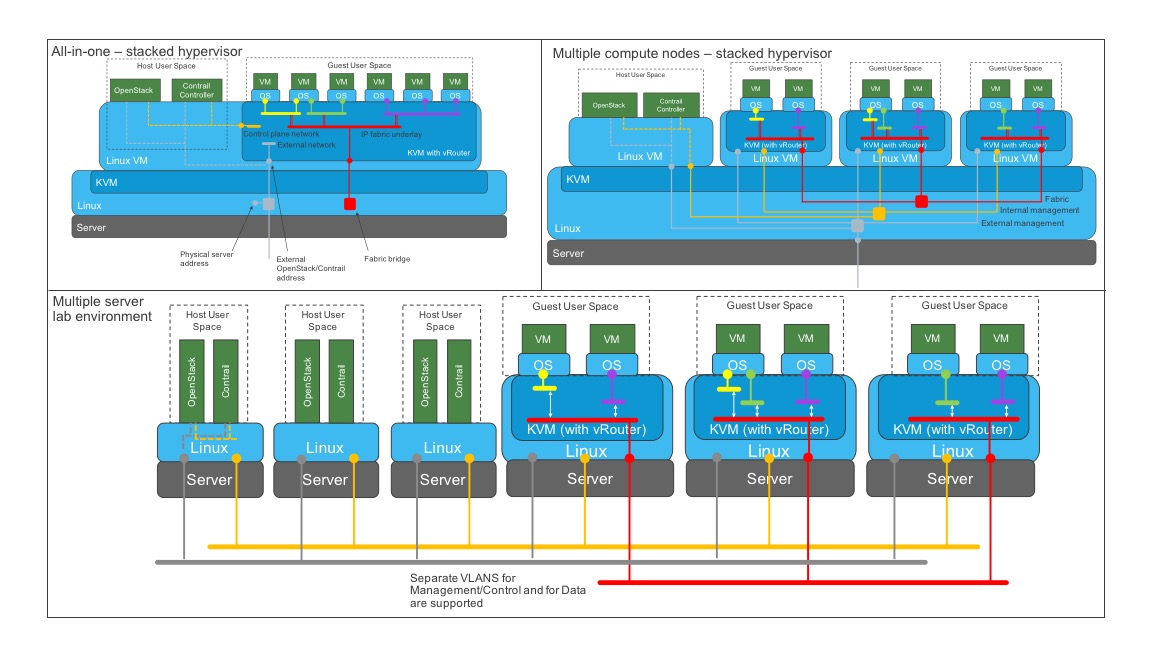

The primary goal of this project is to enable users to quickly bring up a working OPNFV system that employs overlay virtual networks, and to step through working use case examples. Specific deployment scenarios will be supported in the images supplied for download (i.e. the number of servers and their roles will be fixed for each scenario). Users will have the option to create their own deployment scenarios by following the documentation supplied with the packages contained in the stack. OVR Quickstart will support the following deployment scenarios:

- Compact all-in-one – OpenStack and OpenContrail services run on a single operating system instance which is also a hypervisor on which guest virtual machines for client end points and network services are instantiated.

- Multi-hypervisor on hypervisor – Multiple compute nodes run as stacked hypervisors, which allows inter-server flows to be observed and availability zones to be used.

- Multi-server – OpenStack and OpenContrail services run on a dedicated set of physical servers, and a configurable number of compute nodes are used for workloads. High availability and Ceph storage can be configured in this option.

The deployment options are illustrated below:

The first two deployment options will be deployed from snapshot images that can be loaded onto a physical server or into a hypervisor that supports stacked hypervisors, e.g. KVM on physical servers or VMware Fusion on a laptop. The multi-server deployment option will be supported using scripts based on the fab utility (www.fabfile.org).

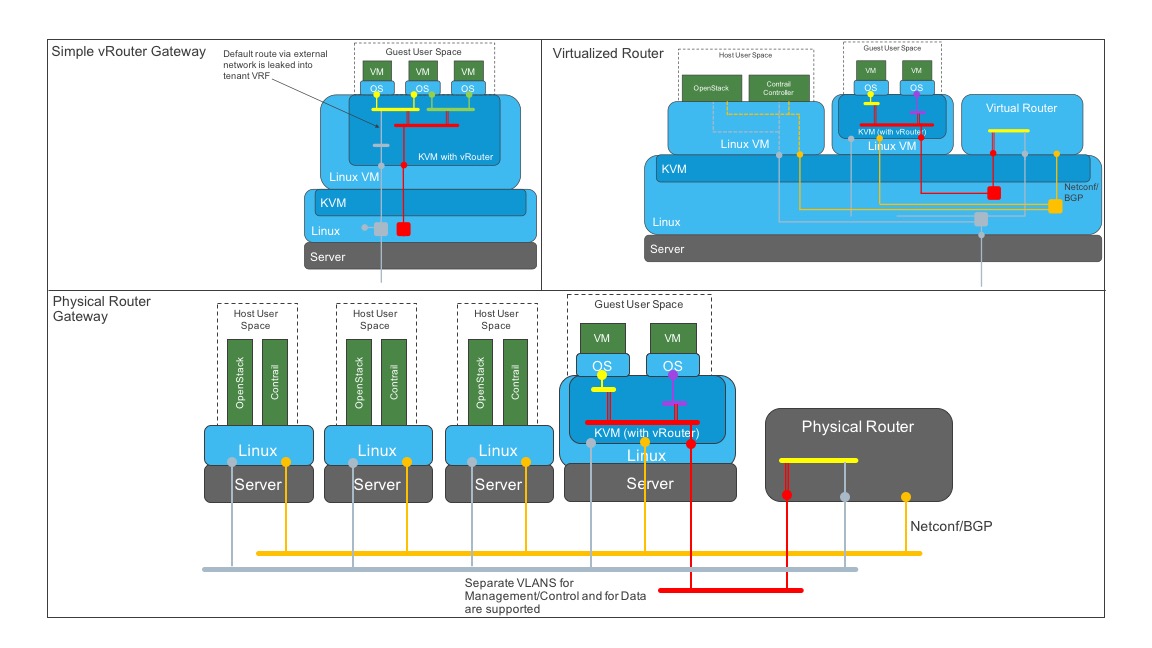
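For the multi-server option, a fab-based installer needs to know which hosts play which role. Fabric 1.x scripts conventionally keep such a mapping in `env.roledefs`; the sketch below builds a comparable dictionary in plain Python. The role names (`controller`, `compute`), the `build_roledefs` helper, and the host strings are assumptions for illustration and do not reflect the actual format used by the OVR installer.

```python
from typing import Dict, List, Sequence


def build_roledefs(controller_hosts: Sequence[str],
                   compute_hosts: Sequence[str]) -> Dict[str, List[str]]:
    """Map hypothetical role names to host lists for a multi-server layout."""
    if not controller_hosts or not compute_hosts:
        raise ValueError("need at least one controller and one compute host")
    return {
        # Every host, in a stable order, for tasks that run everywhere.
        "all": list(controller_hosts) + list(compute_hosts),
        # Hosts running OpenStack and OpenContrail services.
        "controller": list(controller_hosts),
        # Hosts running workloads.
        "compute": list(compute_hosts),
    }


# Illustrative hosts only: one dedicated controller, two compute nodes.
roledefs = build_roledefs(
    ["root@10.0.0.10"],
    ["root@10.0.0.21", "root@10.0.0.22"],
)
```

Changing the number of entries in the compute list is how a configurable number of compute nodes would be expressed in a layout of this kind.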

OVR Quickstart deployments can be connected to other networks using server gateway options, illustrated below:

The gateway options are:

- Simple gateway - vRouter is configured to leak routes from the fabric network into VRFs in order to allow access to the default gateway of the fabric network. The compute node has to be configured to support this feature during installation.

- Virtualized gateway - a virtualized router runs in a hypervisor (may be stacked, as shown, or on a separate server from Contrail compute nodes).

- Physical gateway - a physical router is used.

In the second and third cases, the router peers with the Contrail controller using BGP, and a VRF is configured on the gateway with a matching route target of a virtual network in Contrail.
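The route-target matching described above can be sketched as follows. This is a simplified model, not router or Contrail code: the `Route` class, the `imports_route` helper, and the route-target values are hypothetical. The idea is that a gateway VRF accepts a route only if the route carries at least one route target that the VRF is configured to import.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Route:
    """A route advertised over BGP, tagged with the advertiser's route targets."""
    prefix: str
    route_targets: FrozenSet[str]


def imports_route(vrf_import_targets: FrozenSet[str], route: Route) -> bool:
    """A VRF imports a route when the two sets share at least one route target."""
    return bool(vrf_import_targets & route.route_targets)


# Hypothetical values: the virtual network and the gateway VRF are both
# configured with the same route target, so the gateway imports its routes.
gateway_vrf_imports = frozenset({"target:64512:10001"})
vn_route = Route("10.1.1.0/24", frozenset({"target:64512:10001"}))
other_route = Route("10.2.2.0/24", frozenset({"target:64512:20002"}))

vn_imported = imports_route(gateway_vrf_imports, vn_route)
other_imported = imports_route(gateway_vrf_imports, other_route)
```

In this model `vn_imported` is true and `other_imported` is false, so only the virtual network whose route target matches the gateway VRF becomes reachable through the gateway.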

The OVR Quickstart project will evolve from this initial standalone model and be integrated with the Octopus CI project and OSCAR deployment project as they mature.

Scope:

- The OVR Quickstart project is intended to allow users to quickly deploy OPNFV systems using the OpenStack/OpenContrail/KVM software stack, which uses overlay networking (MPLSoGRE or VXLAN) for transport between virtual machines. The overlay networks support high scaling together with traffic segmentation, and can be configured to implement service chaining.

- The project will support specific deployment models that align to requirements of users who want to quickly assess OVR stack features and set up lab environments.

Testability

- The specific deployment models described above will be tested before images supporting them are made available as downloads on the OPNFV website.

Documentation

- Documentation will be provided to enable users to install the OVR stack according to the deployment models described above.

- Commented Python scripts will be provided to populate the multi-server deployment scenario with the networks, network policies, VMs and service chains needed to demonstrate the use cases (the multi-hypervisor images will be pre-configured).

- Guides will be provided that will lead users through each of the use cases.

Dependencies:

This project depends on the following upstream projects:

- OpenStack – Juno release

- OpenContrail 2.2

- KVM - Ubuntu 14.04

- Docker 1.6

Committers and Contributors:

Initial Project Lead:

- Stuart Mackie (Juniper Networks, wsmackie@juniper.net)

Committers

- Aqeel Asim (Intercloud Systems, aasim@intercloudsys.com)

- Konstantin Babenko (Intercloud Systems, kbabenko@intercloudsys.com)

Contributors

- Narinder Gupta (Canonical, narinder.gupta@canonical.com)

Planned Deliverables

- Packages to support deployment of all-in-one and multi-compute scenarios that will each contain a snapshot image of a complete, running OVR stack that can be installed on a hypervisor, together with documentation and configuration scripts.

- Package with an installer based on fab (www.fabfile.org) for creation of an OVR stack in a multi-server lab environment. A set of configuration files and scripts will be provided to build a specific deployment architecture that allows demonstration of high availability, analytics collection and Ceph storage, in addition to the use cases supported on the stacked hypervisor deployment scenarios.

Proposed Release Schedule:

- Initial release for all-in-one deployments will be May 30

- Initial release for multi-server deployment will be June 30