Bottlenecks

- Proposed name for the project: Automated Design Configuration Evaluation and Tune (Bottlenecks)

- Proposed name for the repository: bottlenecks

- Project Categories: Integration & Testing

Project description:

This project aims to find system bottlenecks by testing and verifying OPNFV infrastructure in a staging environment before committing it to a production environment. Instead of debugging a deployment in the production environment, the project adopts an automated method of executing benchmarks that validates the deployment during staging.

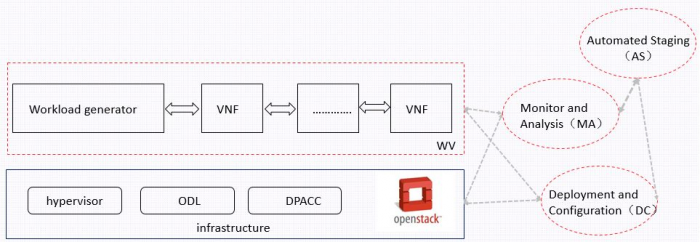

This project will provide a framework to find the bottlenecks of OPNFV infrastructure. The framework has four components: Workload generator and VNFs (WV), Monitor and Analysis (MA), Deployment and Configuration (DC), and Automated Staging (AS), as listed below.

- Workload generator and VNFs: the workload generator generates workloads that pass through the VNFs

- Monitor and Analysis: monitors VNF status and infrastructure status and outputs analyzed results

- Deployment and Configuration: deploys and configures the infrastructure and WV

- Automated Staging: implements automated staging
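The way the four components fit together can be sketched in a few lines of Python. This is only an illustrative toy model, not the project's implementation: the function names, the per-resource capacity dictionary, and the "utilization = offered load / capacity" rule are all assumptions introduced here to show how AS might drive DC, WV, and MA in sequence.

```python
from typing import Dict, List


def deploy_and_configure(capacity: Dict[str, int]) -> Dict[str, int]:
    """DC (hypothetical): pretend to deploy the infrastructure and WV.

    Returns the per-resource capacity of the deployed system.
    """
    return dict(capacity)


def generate_workload(requests_per_second: int) -> int:
    """WV (hypothetical): pretend to drive traffic through the VNFs.

    Returns the offered load.
    """
    return requests_per_second


def monitor_and_analyze(offered_load: int, capacity: Dict[str, int]) -> Dict[str, float]:
    """MA (hypothetical): report utilization for every monitored resource."""
    return {name: offered_load / limit for name, limit in capacity.items()}


def automated_staging(capacity: Dict[str, int], loads: List[int]) -> Dict[int, str]:
    """AS (hypothetical): run DC once, then WV and MA for each load level.

    For each load, records the most utilized resource as the bottleneck candidate.
    """
    deployed = deploy_and_configure(capacity)
    report = {}
    for load in loads:
        utilization = monitor_and_analyze(generate_workload(load), deployed)
        report[load] = max(utilization, key=utilization.get)
    return report


if __name__ == "__main__":
    # Assumed capacities: requests per second each resource can sustain.
    print(automated_staging({"cpu": 800, "network": 1000, "disk": 1500}, [500, 900]))
```

In a real deployment each stand-in function would wrap an installer, a traffic generator, and a monitoring backend; the point of the sketch is only the control flow between the components.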

Scope:

The Bottlenecks framework focuses on bottlenecks of OPNFV infrastructure in terms of both hardware and software. Bottlenecks can be divided into two categories: coarse-grained bottlenecks and fine-grained bottlenecks.

- Forming a staging test framework

  - Release candidates A, B… provide the Infrastructure-layer foundation to be tested

  - A workload generator generates workloads that pass through the VNFs. This workload generator will be scalable and may cover multiple workload models for different scenarios

  - Monitor and analysis units will monitor the status of the infrastructure and VNFs and present results after analysis

- Automatically generating the full set of experimental specifications

  - Documentation and code describing how to generate experimental specifications according to different service level agreements (SLAs)

  - Documentation describing how to find some typical bottlenecks from specific monitoring data

- Measuring the performance of standard benchmarks over a wide range of hardware and software configurations

  - Different hardware resources are used to produce test data for bottleneck analysis

  - Different parameters in software configuration files are used to produce test data for bottleneck analysis

- Automated iterative staging process for finding bottlenecks

  - Achieve full automation of system deployment, evaluation, and evolution by creating code generation tools that link the steps of deployment, evaluation, reconfiguration, and redesign across the full lifecycle

  - Reassignment and reconfiguration of hardware resources

  - Reassignment and reconfiguration of software resources
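As a rough illustration of the scope items above, the following Python sketch enumerates a full set of experimental specifications from hardware and software parameter ranges and then iterates over them until one satisfies an SLA. Everything in it is an assumption made for illustration: the parameter names (`vcpus`, `worker_threads`), the latency-based SLA, and the `toy_benchmark` formula are hypothetical stand-ins, not part of the proposal.

```python
from itertools import product
from typing import Callable, Dict, List, Optional

Spec = Dict[str, object]


def generate_specs(hardware: Dict[str, list], software: Dict[str, list]) -> List[Spec]:
    """Enumerate every combination of hardware and software parameter values."""
    axes = {**hardware, **software}
    names = list(axes)
    return [dict(zip(names, combo)) for combo in product(*(axes[n] for n in names))]


def iterative_staging(
    specs: List[Spec],
    run_benchmark: Callable[[Spec], float],
    sla_latency_ms: float,
) -> Optional[Spec]:
    """Benchmark each spec in order; return the first one that meets the SLA.

    Returns None when no configuration in the set satisfies the SLA.
    """
    for spec in specs:
        if run_benchmark(spec) <= sla_latency_ms:
            return spec
    return None


def toy_benchmark(spec: Spec) -> float:
    """Hypothetical benchmark: latency shrinks as vCPUs and worker threads grow."""
    return 1000.0 / (spec["vcpus"] * spec["worker_threads"])


if __name__ == "__main__":
    specs = generate_specs({"vcpus": [2, 4]}, {"worker_threads": [4, 8]})
    print(len(specs), "specifications generated")
    print(iterative_staging(specs, toy_benchmark, sla_latency_ms=70.0))
```

A real staging loop would replace `toy_benchmark` with a deployment plus benchmark run, and could use the monitoring results to choose the next configuration rather than scanning the set in a fixed order.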

Presentation

Testlabs:

- Reusing existing Infrastructure as much as possible

- Community labs such as the Xian Lab may be used in addition to the LF lab and other labs

- Tests should be portable across other labs

Testability:

- To be integrated in OPNFV Continuous Integration testing.

Tools:

- Load testing, TestON, ApacheBench, Curl-loader, Top, Operf, xenmon, Zabbix, Ceilometer, etc.

- To be continued

Dependencies:

- Installers in “BGS” provide the framework foundation to be tested in the Infrastructure layer.

- Octopus provides continuous integration testing.

- Bottlenecks will consider the outputs of “Yardstick”, “Functest”, “DPACC” and “Qtip”.

- Configuration methods of the upstream software, such as .conf and JSON files

Committers and Contributors:

Project Leader

- Hongbo Tian (Huawei): hongbo.tianhongbo@huawei.com

Committers

- Hongbo Tian (Huawei): hongbo.tianhongbo@huawei.com

- Jun Li (Huawei): matthew.lijun@huawei.com

- Rui Luo (Huawei): larry.luorui@huawei.com

- Hao Pang (Huawei): shamrock.pang@huawei.com

- Manuel Rebellon (Sandvine): mrebellon@sandvine.com

- To be continued

Contributors

- Lynch Michael A (Intel): michael.a.lynch@intel.com

- To be continued

Planned Deliverables:

- Framework

- Test cases

- Diagrams which show the test results

- Reference documentation

- Blueprints (BP) and code for upstream

Proposed Release Schedule:

- Releases will be part of the OPNFV release cycle

- The next release will be the OPNFV R2 release

Key Project Facts

Project Name: Bottlenecks

Repo name: bottlenecks

Project Category: Integration & Testing

Lifecycle State: Proposal

Primary Contact: hongbo.tianhongbo@huawei.com

Project Lead: hongbo.tianhongbo@huawei.com

Jira Project Name: bottlenecks

Jira Project Prefix: BOTTLENECKS

Mailing List Tag: [bottlenecks]

Committers:

Hongbo Tian (Huawei): hongbo.tianhongbo@huawei.com

Jun Li (Huawei): matthew.lijun@huawei.com

Rui Luo (Huawei): larry.luorui@huawei.com

Hao Pang (Huawei): shamrock.pang@huawei.com

Manuel Rebellon (Sandvine): mrebellon@sandvine.com

To be continued

Link to TSC approval: N/A

Link to approval of additional submitters: N/A